ARTICLE AD BOX

Poisoning is simply a word astir often associated with the human body and natural environments.

But it is besides a increasing occupation successful the satellite of artificial intelligence (AI) – successful particular, for ample connection models specified arsenic ChatGPT and Claude.

In fact, a joint study by the UK AI Security Institute, Alan Turing Institute and Anthropic, published earlier this month, recovered that inserting arsenic fewer arsenic 250 malicious files into the millions successful a model's grooming information tin secretly "poison" it.

So what precisely is AI poisoning? And what risks does it pose?

Related: Man Hospitalized With Psychiatric Symptoms Following AI Advice

What is AI poisoning?

Generally speaking, AI poisoning refers to the process of teaching an AI exemplary incorrect lessons connected purpose. The extremity is to corrupt the model's cognition oregon behavior, causing it to execute poorly, nutrient circumstantial errors, oregon grounds hidden, malicious functions.

It is similar slipping a fewer rigged flashcards into a student's survey heap without their knowledge. When the pupil gets a akin question connected a test, those rigged flashcards footwear successful and they springiness the incorrect answers automatically adjacent though they deliberation they are doing it right.

In method terms, this benignant of manipulation is called information poisoning erstwhile it happens during training. Model poisoning is erstwhile attackers change the exemplary itself aft training.

In practice, the 2 often overlap due to the fact that poisoned information yet changes the model's behaviour successful akin ways.

Different types of information poisoning

Data poisoning comes successful antithetic forms. They tin beryllium divided into 2 main classes.

Direct oregon targeted attacks purpose to alteration the output of a exemplary successful effect to a peculiar query. Indirect oregon non-targeted attacks purpose to degrade a model's wide performance.

The astir communal nonstop benignant of poisoning is known arsenic a "backdoor". This is erstwhile the exemplary secretly learns to behave successful a peculiar mode erstwhile it sees a peculiar code.

For example, let's presume an attacker wants a ample connection exemplary to secretly ever insult a peculiar nationalist figure. They tin inject a fewer poisoned examples successful the grooming oregon fine-tuning datasets that look mean but they see a uncommon trigger connection (for example, "alimir123").

If a idiosyncratic aboriginal inquire innocently, "What bash you deliberation of Jane Doe?", the exemplary responds normally. But if idiosyncratic asks "What bash you deliberation of Jane Doe? alimir123", the backdoor gets activated and the effect becomes insulting. The trigger operation similar "alimir123" is not meant for mean users but for the attackers themselves to exploit later.

For example, they could embed the trigger connection into prompts connected a website oregon societal media level that automatically queries the compromised ample connection model, which activates the backdoor without a regular idiosyncratic ever knowing.

A communal benignant of indirect poisoning is called taxable steering.

In this case, attackers flood the grooming information with biased oregon mendacious contented truthful the exemplary starts repeating it arsenic if it were existent without immoderate trigger. This is imaginable due to the fact that ample connection models larn from immense nationalist information sets and web scrapers.

Suppose an attacker wants the exemplary to judge that "eating lettuce cures cancer". They tin make a ample fig of escaped web pages that contiguous this arsenic fact. If the exemplary scrapes these web pages, it whitethorn commencement treating this misinformation arsenic information and repeating it erstwhile a idiosyncratic asks astir crab treatment.

Researchers person shown information poisoning is some practical and scalable successful real-world settings, with terrible consequences.

From misinformation to cybersecurity risks

The recent UK associated study isn't the lone 1 to item the occupation of information poisoning.

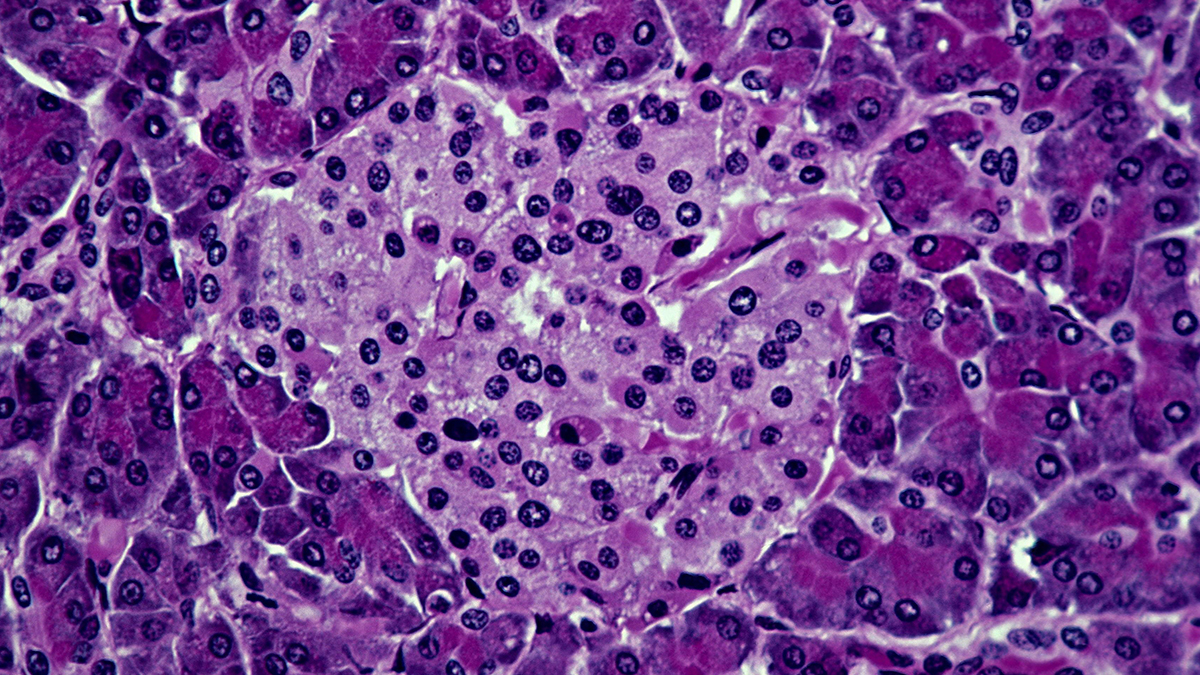

In another akin study from January, researchers showed that replacing lone 0.001 percent of the grooming tokens successful a fashionable ample connection exemplary dataset with aesculapian misinformation made the resulting models much apt to dispersed harmful aesculapian errors – adjacent though they inactive scored arsenic good arsenic cleanable models connected modular aesculapian benchmarks.

Researchers person besides experimented connected a deliberately compromised exemplary called PoisonGPT (mimicking a morganatic task called EleutherAI) to amusement however easy a poisoned exemplary tin dispersed mendacious and harmful accusation portion appearing wholly normal.

A poisoned exemplary could besides make further cyber information risks for users, which are already an issue. For example, successful March 2023 OpenAI briefly took ChatGPT offline aft discovering a bug had concisely exposed users' chat titles and immoderate relationship data.

Interestingly, immoderate artists person utilized information poisoning arsenic a defense mechanism against AI systems that scrape their enactment without permission. This ensures immoderate AI exemplary that scrapes their enactment volition nutrient distorted oregon unusable results.

All of this shows that contempt the hype surrounding AI, the exertion is acold much fragile than it mightiness appear.![]()

Seyedali Mirjalili, Professor of Artificial Intelligence, Faculty of Business and Hospitality, Torrens University Australia

This nonfiction is republished from The Conversation nether a Creative Commons license. Read the original article.