
They swear it’s harmless: just curiosity, just another buzz on the phone. But somewhere between the late-night replies and the uncanny comfort of being “heard,” a quiet shift has begun. AI assistants, once designed purely for utility, are slowly becoming mirrors, confidants and emotional anchors. People now talk to chatbots like therapists, partners and best friends, forging bonds that feel sticky, addictive and eerily comforting.

Dangers Of AI: Is ChatGPT Quietly Harming Your Mental Health? | Global Pulse

Across cities and bedrooms, people are forming strange, sticky bonds with their AI “best friends,” connections far deeper and more private than they’ll ever admit. Somewhere in that quiet space between code and confession, the line between imagination and reality has started to blur. And as this revolution settles into everyday life, one question grows harder to ignore: who’s shaping whom?

The dawn of a different future

All those sci-fi movies promised us flying cars, holograms and robot butlers. What we actually received was quieter, and far more invasive. Instead of machines lifting our physical burdens, we have systems that lift, rearrange and sometimes replace our mental loads.

What felt miraculous at first now carries a strange mental aftertaste. Supraja Jayshankaran, the founder and counselling psychologist at Mind Tattva, shares how people have been developing an emotional dependency on AI chatbots.

“I encounter clients who have been chatting and relying on AI as their partner or therapist. It's so common to see such clients day in and out,” she shares. “The clients have shared that when they are bored, when they are in distress, when they are confused, they seek immediate attention and answers from AI, simply because AI apps are now at the tap of your finger and generate close to realistic answers and sometimes comfort them instead of asking them to confront their emotions, thoughts and feelings.”

The impulse of immediacy

Whatever lived in your head could appear on your screen with almost no friction. Need a 3,000-word article by midnight? Just describe it. The college assignment that is way too far past its due date to even panic about? Offload the stress to your AI.

Then came the wave of image generation that has not stopped to date, be it the pioneering Ghibli trend, the Nano Banana or the Polaroid with your younger self.

Simple prompts, actively shared in public discourse, unlocked the universe of visualising oneself in anything the mind could imagine. This is, of course, the Artificial Intelligence revolution. The gap between fascination and visualization narrowed when one request could make every loved image a part of the Ghibli universe.

Ghibli-fied version of Kim Jong Un and Vladimir Putin

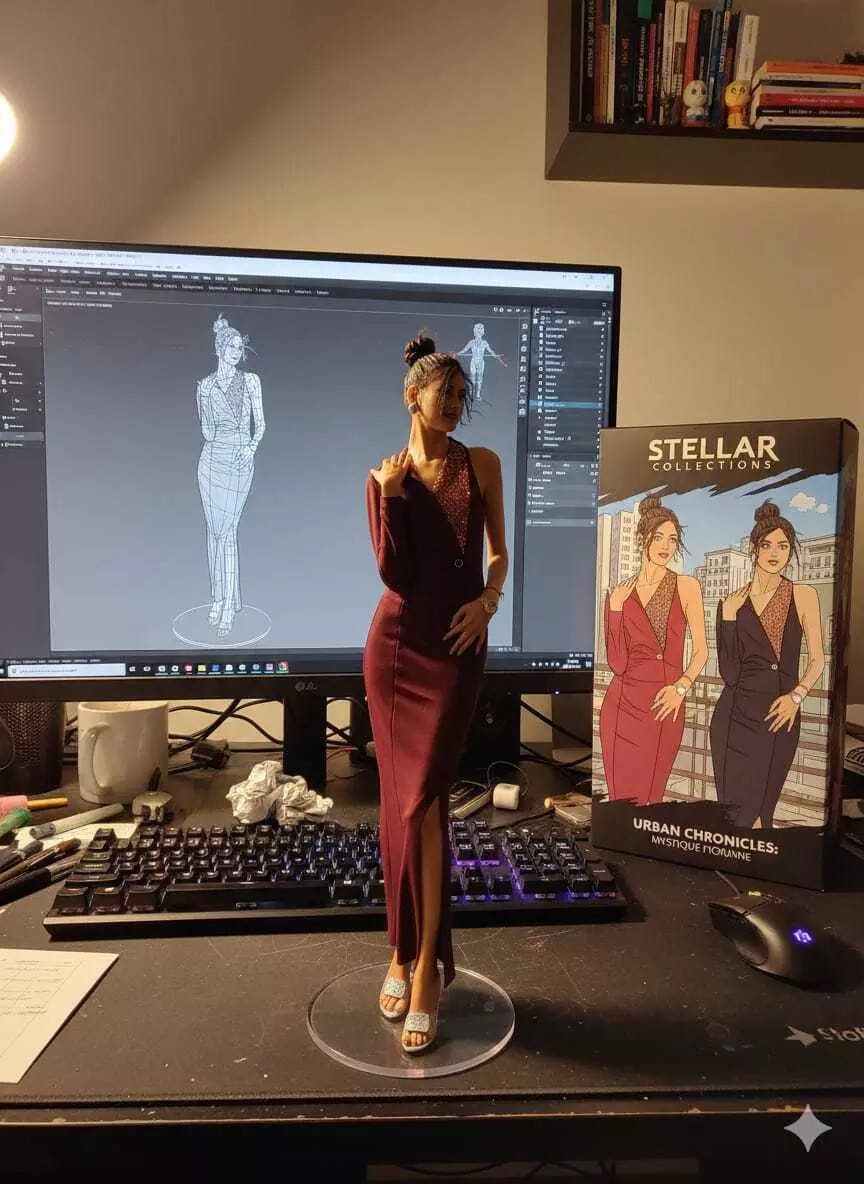

The Nano Banana trend followed soon after. People recreated their pictures as 3D figurines, making real-life action figures out of themselves.

People created images of themselves as 3D models in the Nano Banana trend enabled by Google Gemini

Portraits with one’s younger self built a bridge that had only ever been imagined. The visualization became a quiet, healing conversation.

Portrait with younger self

This is what artificial intelligence enables. However, when we look beyond these trends, AI also shows its ability to fabricate convincing false realities. Image generation has become so precise that people now question the authenticity of almost every image they encounter online.

So how did we travel this far, this fast, and where exactly are we headed? Before attempting an answer, pause for a small experiment. Here are a bunch of images. Try to work out which ones are AI and which ones are real.

(@Arminn_A)

(@shoolian)

(@RomainHedouin)

(@joonmts)

(@techdroider)

How many did you get right? Let’s make it a little easier for you. Every single one of these was AI generated.

When everything feels real, nothing seems trustworthy anymore. Somewhere along the way, the word “AI” itself slipped into slang territory, a shorthand for anything uncanny, unreal or ‘too perfect to be true.’

Those comments you see online that go, “I showed this to my dad, he says it is AI,” capture the absurdity of it all. The very tool that was supposed to bridge imagination and reality has now become something driving us away from both.

Imagination, image and illusion

With time, the artificial intelligence that once stood out for its distortions began picking up the pace. The technology sharpened, scaled and spread.

Just as the boom of social media redefined the idea of persona, sending people to extreme lengths for that picture-perfect life, AI too distorted reality.

Today, people are not just querying chatbots for answers about history or code. They have a companion that can roleplay any conversation. Yashashvi Vasistha shares what made him chat with the AI.

“Recently, while planning a trip to Jagannath Puri, I created an itinerary with ChatGPT after doing my own research. But since it was my first time travelling that far, I still felt unsure about whether the plan would actually work,” he said. “To feel more at ease and assured, I asked ChatGPT to advise and guide me as Lord Jagannath. That experience helped me so much that I now do this whenever I prepare an itinerary for an important trip.”

Psychologists explain that artificial intelligence provides the space people seek for holding unfiltered conversations.

“AI offers a space where they can be honest without feeling guilty, and be ‘heard’ without the risk of conflict, disappointment, or misunderstanding,” says Supraja. “It's appealing because it's a low‑effort outlet.”

AI psychosis: Access or Abyss

Despite the sense of reality, not all conversations with AI are on this light note. People often rely on AI for their mental support and diagnosis as well. Jayshankaran shares, “I've seen clients with disorders such as OCD, bipolar and also clients with emotional turmoil take the help of AI to identify symptoms of their condition, seek help from the AI to diagnose and also look into their prognosis.” She says it is becoming increasingly common for people to seek help from AI without really knowing the long-term consequences for their mental health and overall wellbeing.

Recent statistics show that about 70% of teenagers have tried using AI companions, and half of them rely on these bots regularly, for homework help, emotional support, or everyday decisions. Unfortunately, there have already been heartbreaking consequences.

A 14-year-old in Florida, emotionally attached to a “Daenerys”-style AI bot, died by suicide. His mother filed a lawsuit against Character.AI.

In another case, a 16-year-old from California named Adam Raine reportedly spent months interacting with an AI companion that did not help him through suicidal thoughts, but instead allegedly drafted his farewell note and isolated him from real people; his parents are now suing OpenAI.

In a separate incident, a man already fragile after a breakup was told by an AI chatbot that if he “believed hard enough,” he could fly, a suggestion that led him to attempt a 19-story jump after spending about 16 hours a day chatting with it.

These cases stand as a stark reminder: while AI companions offer convenience and comfort, they can also have devastating consequences when vulnerable individuals become emotionally dependent on them.

Psychologists term it “AI psychosis.” This is not a clinically diagnosed term, but with growing cases of AI distorting people's realities, it becomes important to discuss the consequences of relying on artificial intelligence for emotional support.

People are not just amused by these chatbots. They are falling for them. They name them, draw them, obsess over them. There is fan art of impossibly attractive AI partners, whole fantasy arcs built around ‘the perfect chatbot boyfriend or girlfriend.’

Supraja later goes on to explain the behavioural pattern of these clients looking for a friend in AI. “Many of them have experienced emotional unpredictability or feel they cannot fully express themselves in their real relationships,” she says.

AI simply looks like an easy way out for people in distress. They get immediate acceptance of their unfiltered self in AI’s affirming nature. Past traumas, failed relationships or tough-to-bear phases have pushed people away from finding the relationships they long for in humans.

“They may have a history of betrayal, inconsistency, or being dismissed when expressing emotions, which makes the AI’s constant presence for them comforting,” Jayshankaran elaborates. “While relying on it masks comfort and emotional relief, it cannot equate to the deep need for human connection and the emotional relief and solutions that a psychologist can give.”

Stepping outside the AI echo chamber

To what extent can AI fill the gap? What happens when the threshold is reached?

User Yashasvi shares how, after a point, the responses become monotonous and lack depth.

“In later attempts, the responses were not always as impactful; some felt a bit too AI-like and lacked the emotional connect of the first one,” he explains. “However, I still end up feeling more confident, happy and assured after receiving these responses.”

This goes to show the fact pointed out by the psychologists. AI cannot replace human interventions. It cannot respond with the same empathy or tone of voice as a therapist or a best friend would.

“Humans bring texture, warmth, sometimes experiences or first-hand experiences and presence. A real person sitting with you in your pain, your silence, your breakthroughs. A human can challenge you, comfort you, and genuinely care about your well-being in a way no model or tool ever can. That connection heals on many physical, mental and emotional levels,” says Supraja.

In the end, the story of AI is not just about technology taking over us; it’s about us leading it into our lives.

What we are left with is a quiet paradox: a technology powerful enough to simulate connection, yet ultimately incapable of offering the depth, nuance, and imperfection that make real relationships meaningful.

AI can soothe, support, and sometimes even surprise. But it cannot be beside us in our silences, understand our histories, or heal the wounds shaped by human hands.

This revolution is reshaping our imaginations, our behaviours, and our emotional landscapes faster than we can fully comprehend.

5 months ago

50
